Audio and Multimedia

Multimedia content on the Web, by its definition - including or involving the use of several media - would seem to be inherently accessible or easily made accessible.

However, if the information is audio, such as a RealAudio feed from a news conference or the proceedings in a courtroom, a person who is deaf or hard of hearing cannot access that content unless provision is made for a visual presentation of audio content. Similarly, if the content is pure video, a blind person or a person with severe vision loss will miss the message without the important information in the video being described.

Remember from Section 2 that to be compliant with Section 508, you must include text equivalents for all non-text content. Besides including alternative text for images and image map areas, you need to provide textual equivalents for audio and more generally for multimedia content.

Some Definitions

A transcript of audio content is a word-for-word textual representation of the audio, including descriptions of non-speech sounds like "laughter" or "thunder." Transcripts are valuable not only for persons with disabilities; they also permit searching and indexing of the content, which is not possible with the audio alone. "Not possible" is, of course, too strong. Search engines could, if they wanted, apply speech recognition to audio files and index the results - but they don't.

When a transcript of the audio part of an audio-visual (multimedia) presentation is displayed synchronously with the audio-visual presentation, it is called captioning. When speaking of TV captioning, open captions are those in which the text is always present on the screen and closed captions are those viewers can choose to display or not.

Descriptive video, or described video, intersperses spoken explanations of important visual content with the normal audio of a multimedia presentation. These descriptions are also called audio descriptions.

Section 508 Requirement for Transcripts

The availability of a transcript of audio content satisfies the requirement of the first Section 508 standard formulated by the Access Board.

§1194.22 (a)

A text equivalent for every non-text element shall be provided (e.g., via "alt", "longdesc", or in element content).

Sites that include transcripts for their audio content meet this Section 508 standard.

For example, many of the segments of the NewsHour from the Public Broadcasting Service, PBS (http://www.pbs.org/newshour), include transcripts, as illustrated by the following screenshot.

Transcript on the NewsHour site

Note that the transcript is not a separate file; it is just part of the page. As mentioned at the beginning of this section, transcripts of audio content are important in allowing your content to be searched and indexed.
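As a minimal sketch of keeping a transcript in the page itself, the following Python builds an HTML fragment from transcript entries. The function name `transcript_html`, the speaker names, and the sample lines are all hypothetical, invented for illustration. Non-speech sounds are shown in brackets, in line with the definition of a transcript given earlier.

```python
from html import escape

def transcript_html(title, entries):
    """Return an HTML fragment holding a transcript as ordinary page text.

    entries is a list of (speaker, text) pairs. A speaker of None marks a
    non-speech sound such as laughter, which is shown in brackets.
    """
    parts = ["<h2>Transcript: %s</h2>" % escape(title)]
    for speaker, text in entries:
        if speaker is None:
            parts.append("<p>[%s]</p>" % escape(text))
        else:
            parts.append(
                "<p><strong>%s:</strong> %s</p>" % (escape(speaker), escape(text))
            )
    return "\n".join(parts)

# Hypothetical sample data, not taken from any real broadcast.
sample = [
    ("Host", "Welcome to the program."),
    (None, "laughter"),
    ("Guest", "Thanks for having me."),
]
fragment = transcript_html("Sample interview", sample)
print(fragment)
```

Because the result is plain HTML text placed in the page, it is available to screen readers and to search engines without any extra step.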

The discussion included with the final standards from the Access Board is somewhat misleading.

The Board also interprets this provision [1194.22 (a)] to require that when audio presentations are available on a Web page, because audio is a non-textual element, text in the form of captioning must accompany the audio, to allow people who are deaf or hard of hearing to comprehend the content.

Since captioning of pure audio doesn't really make sense, we interpret the Access Board instructions as requiring transcripts of audio content.

Requirement for Captioning

A transcript of the audio component of multimedia content does not, by itself, satisfy paragraph 1194.22 (b) of the Access Board's final standards.

§1194.22 (b)

Equivalent alternatives for any multimedia presentation shall be synchronized with the presentation.

Sections 1194.22 (a) and 1194.22 (b) taken together require that a multimedia presentation have its transcript of the audio synchronized with the presentation, that is, it must be captioned.
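To make "synchronized" concrete, here is a small Python sketch of the idea, not of any particular player's implementation. It assumes each piece of the transcript carries a start time, and it looks up which piece should be on screen at a given playback time. The cue list and the function name `caption_at` are hypothetical.

```python
from bisect import bisect_right

# Hypothetical cues: (start time in seconds, caption text), sorted by start time.
CUES = [
    (0.0, "Good evening."),
    (2.5, "[applause]"),
    (4.0, "Tonight we look at captioning on the Web."),
]

def caption_at(cues, seconds):
    """Return the caption that should be shown at the given playback time."""
    starts = [start for start, _ in cues]
    # Index of the last cue whose start time is <= seconds.
    index = bisect_right(starts, seconds) - 1
    if index < 0:
        return None  # playback has not yet reached the first cue
    return cues[index][1]

print(caption_at(CUES, 3.0))  # prints "[applause]"
```

A plain transcript is the same text without the start times; adding them is what turns a transcript into captions.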

As a society, we are familiar with closed captioning on television because all television sets with screens 13 inches or larger manufactured after July 1993 had to have built-in caption decoders, and many television shows include closed captions, which you can view with a setting on your TV. Frequently we see them in noisy environments like airports or bars. There are many firms that specialize in captioning for TV, as a simple search on the Web will attest.

The picture is not so bright for captioning on the Web. When this course was first written, there was a worldwide TV network on the web for people with disabilities, called ableTV, which had captioned multimedia. But it is gone now. At that time (around 2001) I complained that I could not find a commercial firm that did captioning for the web. I was contacted by the folks at Closed Caption Maker (http://www.ccmaker.com/) of Baltimore, Maryland, who informed me that they had been in the business of providing closed captions for the web for ten years. Their web site includes an example of their captioning, and they are refreshingly explicit about charges.

A Google search for "captions web" now yields this site and lots of others. Several sites make a point of the fact that Section 508 of the Rehabilitation Act requires captioning of all training and informational video and multimedia productions that support a federal agency's mission, regardless of format, if they contain speech or other audio information necessary for the comprehension of the content.

SAMI

In the summer of 1998, Microsoft introduced Synchronized Accessible Media Interchange, or SAMI, for adding closed captioning to multimedia content viewed with Microsoft Windows Media Player. Demonstrations and sample multimedia files are available at the SAMI site (http://msdn2.microsoft.com/en-us/library/ms971327.aspx). Some of the variations on closed captions are very interesting, for example, using highlighting in a transcript as a way of displaying captions.

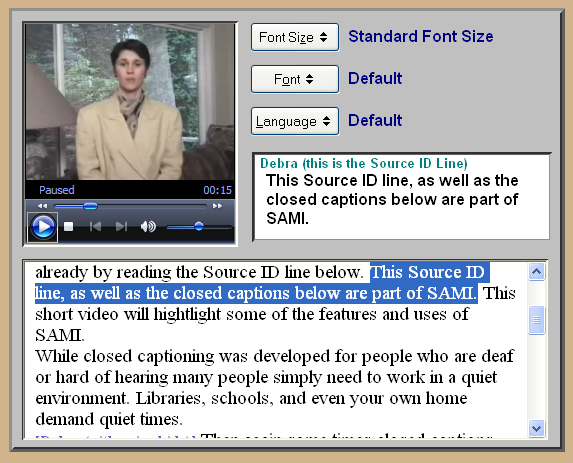

Here is an example from the SAMI site where the transcript is displayed in a window under the video, and the current text, that is, the caption, is highlighted in the displayed transcript.

Using highlighting for captions with SAMI

SAMI is an HTML-like language that describes the "layout" of a multimedia file, and that layout includes the timing of events for displaying or, as in the example above, highlighting the current caption. For web pages, you can view the source file with View > Source, or with Source > View Source in the Web Accessibility Toolbar. I haven't found a comparable option in multimedia players for viewing the source of SAMI or, as we will mention in a bit, SMIL files. SAMI files are needed for captioning multimedia content played on Microsoft Windows Media Player.
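Since player menus do not readily show SAMI source, the following Python sketch writes out roughly what a small SAMI file looks like. It reflects my understanding of the general shape of the format: SYNC elements whose Start values are times in milliseconds, and a style class that names the caption language. The function name `build_sami`, the class name `ENUSCC`, the styling, and the sample captions are assumptions made for illustration; check Microsoft's SAMI documentation before relying on the details.

```python
from html import escape

def build_sami(title, cues):
    """Build a minimal SAMI-style document from (start_ms, text) caption cues."""
    lines = [
        "<SAMI>",
        "<HEAD>",
        "<TITLE>%s</TITLE>" % escape(title),
        '<STYLE TYPE="text/css"><!--',
        "P { font-family: Arial; color: white; background-color: black; }",
        # Assumed class describing English closed captions.
        ".ENUSCC { Name: English; lang: en-US; SAMIType: CC; }",
        "--></STYLE>",
        "</HEAD>",
        "<BODY>",
    ]
    for start_ms, text in cues:
        # Each SYNC element gives the time, in milliseconds, at which
        # the caption that follows should appear.
        lines.append("<SYNC Start=%d>" % start_ms)
        lines.append("<P Class=ENUSCC>%s</P>" % escape(text))
    lines.append("</BODY>")
    lines.append("</SAMI>")
    return "\n".join(lines)

# Hypothetical captions for illustration only.
sami_text = build_sami("Sample clip", [
    (0, "Good evening."),
    (2500, "[applause]"),
    (4000, "Tonight we look at captioning on the Web."),
])
print(sami_text)
```

The output is plain text, so a file like this can be written and inspected in any text editor.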

SMIL

The W3C (http://w3.org) has defined a markup language that provides for captions. SMIL (pronounced "smile") stands for Synchronized Multimedia Integration Language. SMIL became a technical recommendation of the W3C in 1998 (http://www.w3.org/TR/REC-smil/). At this writing, the current recommendation is SMIL 2.1 (http://www.w3.org/TR/2005/REC-SMIL2-20051213/), and SMIL 3.0 is in last call (http://www.w3.org/TR/2007/WD-SMIL3-20070713/).

It seems that you must use SMIL for RealPlayer and QuickTime, and SAMI for Microsoft Windows Media Player.
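As a rough illustration of how SMIL ties captions to a video, the Python sketch below generates a minimal SMIL 1.0 presentation. The par element plays its children in parallel, and the system-captions test attribute from SMIL 1.0 marks the text stream as something to show only when the viewer has asked for captions. The function name `build_smil`, the file names `clip.rm` and `captions.rt`, and the region sizes are hypothetical, and real players may expect additional attributes.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import quoteattr

def build_smil(video_src, captions_src):
    """Return a minimal SMIL 1.0 document that plays a video and a caption
    text stream in parallel."""
    return "\n".join([
        "<smil>",
        "  <head>",
        "    <layout>",
        '      <root-layout width="320" height="300"/>',
        '      <region id="video" top="0" left="0" width="320" height="240"/>',
        '      <region id="captions" top="240" left="0" width="320" height="60"/>',
        "    </layout>",
        "  </head>",
        "  <body>",
        "    <par>",
        '      <video src=%s region="video"/>' % quoteattr(video_src),
        # Shown only when the viewer has turned captions on.
        '      <textstream src=%s region="captions" system-captions="on"/>'
        % quoteattr(captions_src),
        "    </par>",
        "  </body>",
        "</smil>",
    ])

smil_text = build_smil("clip.rm", "captions.rt")
ET.fromstring(smil_text)  # raises ParseError if the markup is not well-formed XML
print(smil_text)
```

Here the caption text itself would live in a separate timed-text file, such as a RealText file for RealPlayer; the SMIL file only says what plays together and where it appears.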

Here is a screenshot of a video example with captions, from the NCAM site (http://ncam.wgbh.org/richmedia/examples/62.html).

RealPlayer with Captions

These are closed captions, so you must let RealPlayer know that you want to see them - which is a task. If you can find the menus, use Tools > Preferences > Content and, in the Accessibility section of the page, check both "Use supplemental text captioning if available" and "Use descriptive audio when available."

Closed captioning for TV was developed by the Corporation for Public Broadcasting television station WGBH in Boston (http://wgbh.org). Its Caption Center (http://main.wgbh.org/wgbh/pages/mag/services/captioning/), founded in 1972, played an instrumental role in the creation and passage of the Television Decoder Circuitry Act of 1990, the law that now requires built-in caption decoder circuitry in most new televisions. The National Center for Accessible Media, or NCAM (http://ncam.wgbh.org), at WGBH, is working on the issues of captioning for the Web - and is certainly the best resource in this area.

The NCAM site (http://ncam.wgbh.org/richmedia/examples) includes a number of examples of captioned and video-described science clips.

NCAM has developed MAGpie, the Media Access Generator, which is an authoring tool for making multimedia content accessible. With this freely downloadable, Windows-based authoring environment, developers can add captions in three formats: SAMI, QuickTime and RealText.

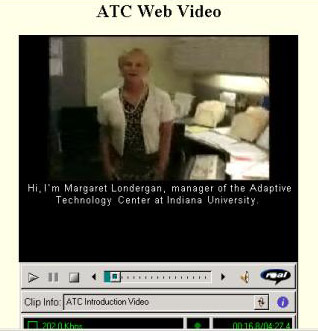

Captioned video from Indiana ATC using MAGpie

The Adaptive Technology Center (http://www.indiana.edu/~iubdrh/) of Indiana University, Bloomington, Indiana, has an example of a captioned video produced with MAGpie (http://www.indiana.edu/~iubdrh/video.html).

The home of MAGpie is the NCAM Rich Media Project (http://ncam.wgbh.org/richmedia). This is a valuable resource for tools and samples of captioning of multimedia on the Web.

In October 2007, AOL, Google, Microsoft and Yahoo! joined together to ask that NCAM establish and support ICF, the Internet Captioning Forum, to address the complex issues of captioning on the web.

Video Descriptions

Remember that video descriptions are audio descriptions of the events or settings in the video, added to the audio track of a multimedia presentation. Comments like "Fred enters the room with a fearful look on his face" are interspersed between the normal audio segments, if possible. Such additional audio requires remaking the audio track of the multimedia content. Unlike a transcript, video description also requires interpretation on the part of the audio describer, not just transcription. Was that fear on Fred's face, or anticipation?

Summary

To comply with the Section 508 Web Accessibility Standards, you must provide a transcript for any audio on your Web site. You must also provide synchronized text (i.e., captions) for multimedia presentations. If there is crucial information that is only in the video of your multimedia content, then you must either provide audio descriptions or go back and make sure all crucial information is available in audio and captions.

If you have comments or questions on the content of this course, please contact Jim Thatcher.

This course was written for the Information Technology Technical Assistance and Training Center, funded in support of Section 508 by NIDRR and GSA at Georgia Institute of Technology, Center for Rehabilitation Technology.